2026-04 - W1

Why Individual AI Adoption Doesn’t Become Organizational Capability

What matters is developing your own feel for when AI adds real value versus when it’s just generating plausible filler.

Interesting post on AI adoption, although I’m not that convinced about the following claim:

The cost of choosing wrong is real, but it’s recoverable. The cost of choosing nothing is falling behind while feeling responsible about it.

Lots of people are claiming “You will be left behind”. I don’t understand why they use this kind of argument to push people to adopt AI. It has the same “Us vs Them” vibe as the current political landscape, where opponents are more into “They are wrong” than “We can improve things”. The same goes for AI CEOs who tell people that software engineers will lose their jobs, or that it takes a lot of energy to train a human… I can’t believe they make this kind of statement to support their arguments. I guess fear does pay.

How we sped up bun by 100x

Another article that leverages AI, using what I think is an autosearch approach, to recreate a tool.

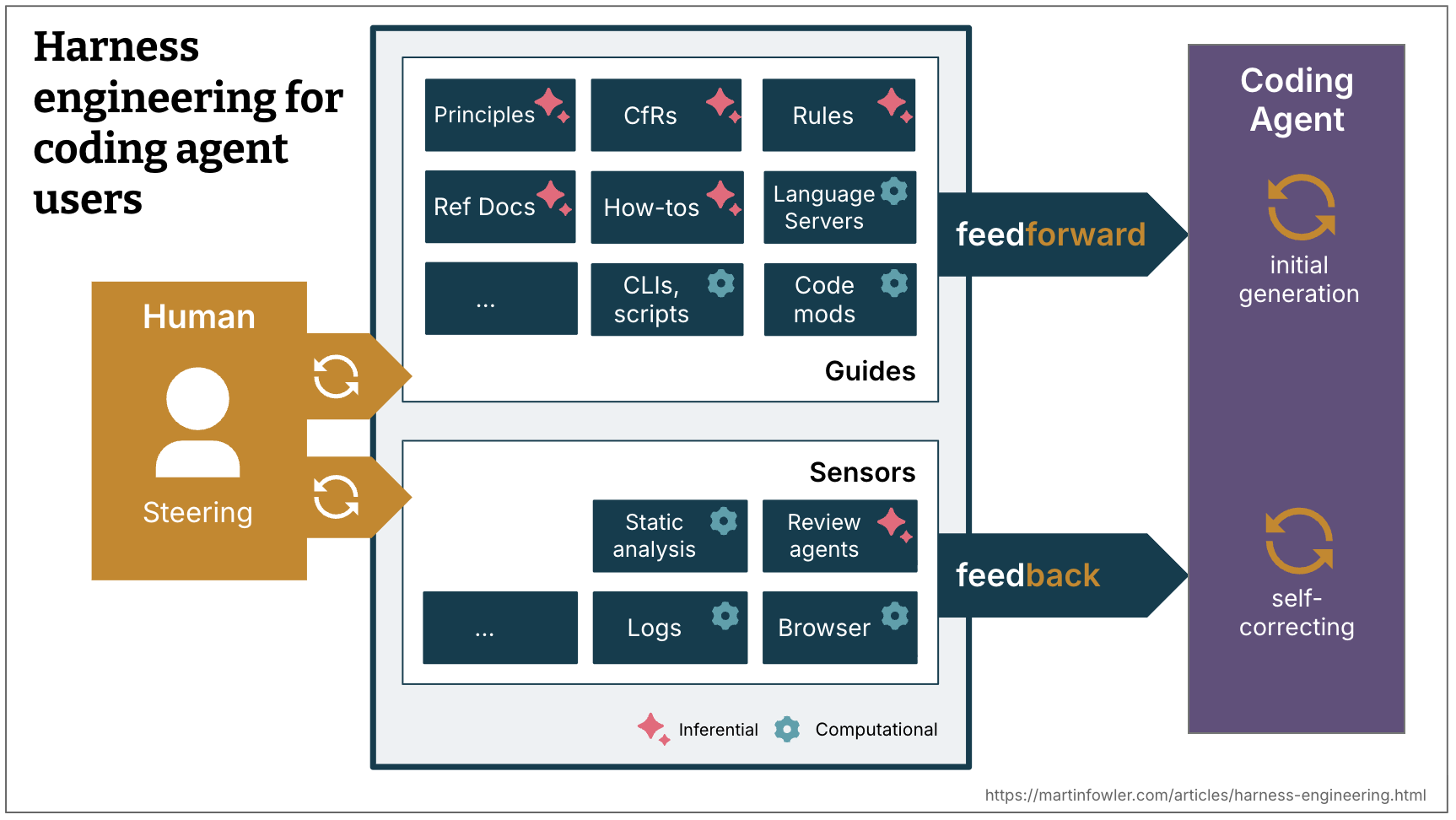

Harness engineering for coding agent users

Again, a nice article from Thoughtworks, adding definitions and structure to what’s happening in the AI world.

2603.22106 From Technical Debt to Cognitive and Intent Debt: Rethinking Software Health in the Age of AI

Interesting paper. I was aware of the first two “debts” (technical and cognitive). The third one, intent debt, is a useful concept and worth thinking about.

This article proposes a Triple Debt Model for reasoning about software health built around three interacting debt types: technical debt in code, cognitive debt in people, and intent debt in externalized knowledge. Cognitive debt concerns what people understand; intent debt concerns what is explicitly captured for humans and machines to use.

Agent responsibly - Vercel

There is a fundamental difference between relying on AI and leveraging it.

- Relying means assuming that if the agent wrote it and the tests pass, it’s ready to ship. The author never builds a mental model of the change. The result is massive PRs full of hidden assumptions that are impossible to review because neither the author nor the reviewer has a clear picture of what the code actually does.

- Leveraging means using agents to iterate quickly while maintaining complete ownership of the output. You know exactly how the code behaves under load. You understand the associated risks. You’re comfortable owning them.

Home Maker: Declare Your Dev Tools in a Makefile

A simple solution for managing your dev tools, using something every Unix system has: make.

I’m quite tempted to use this, but I’m already deep into Nix… Sunk cost fallacy kicking in!!!